Govern every agent action before it becomes a risk

The sovereign governance and assurance platform for autonomous AI. Every agent action intercepted, risky actions blocked, and every decision explained before it reaches your systems.

See Anchor8 in Action

Watch how Anchor8 governs, audits, and controls autonomous AI agents in real time

Aligned with the AI governance standards that matter

While agents act, no one can prove they are safe.

Enterprises are deploying autonomous AI at scale. Without enforcement-grade governance, every deployment is a liability waiting to materialize.

Hallucinations reach production undetected

LLMs fabricate facts, invent citations, and generate plausible-sounding incorrect outputs. Without a detection layer, these reach customers, contracts, and compliance reports.

No explainable audit trail

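When an agent blocks a transaction or denies a claim, there is no record of its reasoning. Article 13 of the EU AI Act requires high-risk AI systems to be transparent enough for deployers to interpret and use their output, with most high-risk obligations scheduled to apply from August 2026.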

Every competitor is fail-open

If an observability or monitoring tool goes offline, agents keep running without oversight. Risk does not pause for outages. Fail-open is not a governance posture. It is the absence of one.

Capabilities built for trust

Anchor8 stops risky decisions before they execute. Every agent action is governed and reconstructed across session context, tool calls, and model decisions before it reaches your systems.

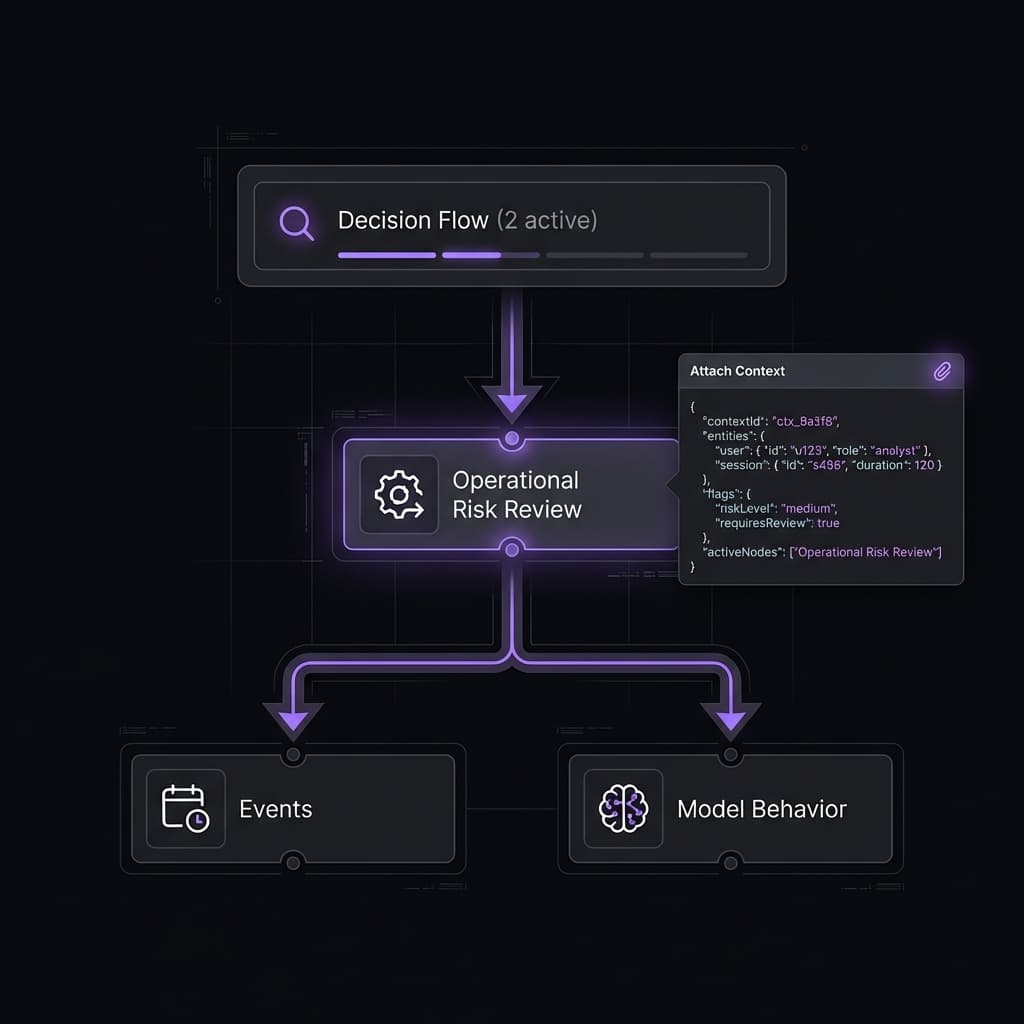

Decision Traceability Engine

Every AI decision is recorded, reconstructed, and explainable — including prompts, tools, context, and intermediate reasoning steps.

- Input
- Context
- Tool Invocation
- Decision Output
- Model Reasoning
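One way to picture such a record is a single object that captures each of the stages named above. The sketch below is a hypothetical shape, not Anchor8's actual schema: `buildTraceRecord` and every field name are assumptions chosen for illustration.

```javascript
// Hypothetical trace-record shape (assumed field names, not Anchor8's schema).
function buildTraceRecord({ agentId, input, context, toolCalls, reasoning, output }) {
  if (!agentId) throw new Error("agentId is required");
  // Object.freeze is shallow: the top-level record cannot be reassigned.
  return Object.freeze({
    agentId,
    recordedAt: new Date().toISOString(),
    input,                        // the prompt or request the agent received
    context: { ...context },      // session state visible to the agent
    toolCalls: toolCalls.map((c) => ({ tool: c.tool, args: c.args, result: c.result })),
    reasoning: [...reasoning],    // intermediate steps, in order
    output,                       // the final decision
  });
}

const record = buildTraceRecord({
  agentId: "claims-agent-01",
  input: "Approve refund for order 1042?",
  context: { customerTier: "gold" },
  toolCalls: [{ tool: "lookupOrder", args: { id: 1042 }, result: { total: 80 } }],
  reasoning: ["Order total is under the refund limit"],
  output: { decision: "approve" },
});
console.log(record.toolCalls[0].tool); // lookupOrder
```

Keeping tool calls and reasoning steps as ordered arrays is what lets a reviewer replay the decision step by step later.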

Real-time monitoring

Continuously monitor AI behavior to detect hallucinations, policy violations, bias, and drift before they escalate into incidents.

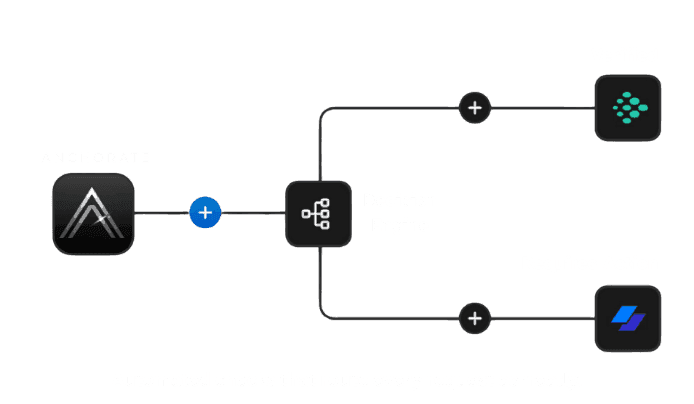

Controlled Integrations

Safely connect AI systems to enterprise tools and data sources while enforcing access controls, permissions, and policy boundaries.
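A policy boundary of this kind is often modeled as a deny-by-default allowlist that maps each agent to the tools and operations it may use. The sketch below assumes that model; the `grants` table, the agent names, and the `isAllowed` helper are hypothetical, not an Anchorate API.

```javascript
// Hypothetical per-agent grants: agent -> tool -> allowed operations.
const grants = {
  "billing-agent": { payments: ["read", "refund"], crm: ["read"] },
  "support-agent": { crm: ["read", "write"] },
};

// Deny by default: anything not explicitly granted is refused.
function isAllowed(agentId, tool, operation) {
  const toolGrants = grants[agentId]?.[tool];
  return Array.isArray(toolGrants) && toolGrants.includes(operation);
}

console.log(isAllowed("billing-agent", "payments", "refund")); // true
console.log(isAllowed("billing-agent", "crm", "export"));      // false
console.log(isAllowed("unknown-agent", "crm", "read"));        // false
```

Denying by default means a newly connected tool is unreachable until someone grants access to it, rather than open until someone remembers to restrict it.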

Structured AI Investigations

High-risk decisions automatically trigger structured, multi-perspective investigations that surface root causes rather than assumptions.


Governance-Grade Security

Built with enterprise-grade security, encryption, and compliance controls to support regulated environments from day one.

```javascript
// launch Anchorate portal
anchorate.connect('enterprise');

// trigger secure workflow
anchorate.trigger('secure_session');

// apply policy-based access control
anchorate.enforce('zero_trust');

// monitor session in real time
anchorate.observe('activity_stream');

// finalize secure connection
anchorate.commit();
```


Hallucination Guard

Continuously scans every agent output for fabricated facts, invented citations, and statistical drift before it reaches the end user.
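One narrow check in this family can be sketched simply: flag any citation in an agent's output that does not match a source the agent actually retrieved. This assumes citations appear as bracketed IDs such as `[doc-12]`. The format and the `findUnsupportedCitations` name are hypothetical, and the check covers only invented citations, not fabricated facts in general.

```javascript
// Assumes citations are written as bracketed IDs like [doc-12] (hypothetical format).
function findUnsupportedCitations(outputText, retrievedSourceIds) {
  const known = new Set(retrievedSourceIds);
  const cited = [...outputText.matchAll(/\[([\w-]+)\]/g)].map((m) => m[1]);
  // Report each unsupported ID once, in order of first appearance.
  return [...new Set(cited.filter((id) => !known.has(id)))];
}

const flagged = findUnsupportedCitations(
  "Refunds take 5 days [doc-3]. Fees are waived [doc-9] [doc-9].",
  ["doc-1", "doc-3"],
);
console.log(flagged); // [ 'doc-9' ]
```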

Bias and Fairness Review

Flags demographic bias, toxicity, and harmful stereotypes in agent decisions before they execute. Every flag is logged with a full explanation.

Fail-Closed by Design

If Anchor8 goes offline for any reason, all agent actions stop. Governance is never bypassed by an outage. Security is not a best-effort system.
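The fail-closed property can be illustrated with a small wrapper: if the governance check throws, times out, or returns anything other than an explicit approval, the action is denied. `checkWithGovernance` stands in for whatever call reaches the governance service; it, the `{ verdict: "allow" }` response shape, and the 2-second default timeout are all hypothetical.

```javascript
// Fail-closed gate: only an explicit { verdict: "allow" } lets the action run.
async function failClosedGate(checkWithGovernance, action, timeoutMs = 2000) {
  let timer;
  const timeout = new Promise((_, reject) => {
    timer = setTimeout(() => reject(new Error("governance timeout")), timeoutMs);
  });
  try {
    const result = await Promise.race([checkWithGovernance(action), timeout]);
    return result?.verdict === "allow";
  } catch {
    return false; // outage, timeout, or error: block by default
  } finally {
    clearTimeout(timer);
  }
}

const healthy = async () => ({ verdict: "allow" });
const offline = async () => { throw new Error("ECONNREFUSED"); };
const hanging = () => new Promise(() => {}); // never answers

(async () => {
  console.log(await failClosedGate(healthy, { type: "refund" }));     // true
  console.log(await failClosedGate(offline, { type: "refund" }));     // false
  console.log(await failClosedGate(hanging, { type: "refund" }, 50)); // false
})();
```

A fail-open wrapper would differ only in what the `catch` branch returns, which is why the choice is a deliberate policy decision rather than an implementation detail.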

AI that holds up under scrutiny

Controlled System Boundaries

Anchorate limits how AI systems interact with tools and data, ensuring every connection respects access controls and governance rules.

More reliable decisions

Reduction in decision-related risk.

Designed for accountability

When AI decisions affect customers, revenue, or compliance, speed alone isn't enough. Anchorate prioritizes traceability, reviewability, and responsibility.

Deploy with confidence

Decision support, with accountability

Anchorate assists human decision-makers by surfacing evidence, risks, and alternatives — without removing accountability.

Automated verification & decision flow

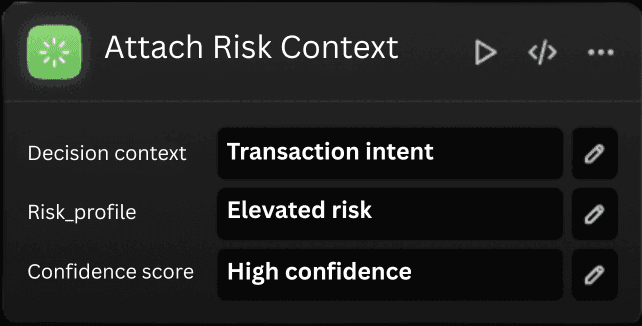

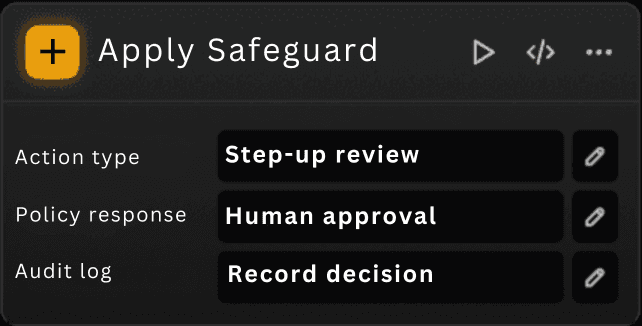

Policy-Aware Execution

Actions are executed only when policies, permissions, and risk thresholds are satisfied — or explicitly approved.
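The execution rule above reduces to a small decision function: run the action when permissions, every policy, and the risk threshold are all satisfied; otherwise hold it until someone explicitly approves it. The `decide` function, the policy objects, the `riskScore` field, and the 0.7 default threshold are assumptions made for this sketch.

```javascript
// Returns "execute" or "hold_for_approval" (hypothetical outcome names).
function decide(action, { policies, permissions, riskThreshold = 0.7, approvedBy = null }) {
  const permitted = permissions.includes(action.permission);
  const policiesPass = policies.every((p) => p.check(action));
  const withinRisk = action.riskScore < riskThreshold;
  if (permitted && policiesPass && withinRisk) return "execute";
  // Anything short of full compliance runs only with an explicit approval.
  return approvedBy ? "execute" : "hold_for_approval";
}

const policies = [{ name: "max-refund", check: (a) => (a.amount ?? 0) <= 500 }];
const permissions = ["payments:refund"];
const refund = { permission: "payments:refund", amount: 120, riskScore: 0.2 };
const bigRefund = { ...refund, amount: 5000 };

console.log(decide(refund, { policies, permissions }));                         // execute
console.log(decide(bigRefund, { policies, permissions }));                      // hold_for_approval
console.log(decide(bigRefund, { policies, permissions, approvedBy: "j.doe" })); // execute
```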

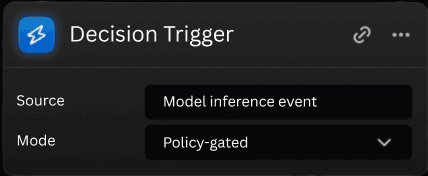

Every action passes through three gates

Fast checks handle ordinary requests. Elevated review handles ambiguous or high-risk actions. No action moves forward without governance.

Lane 1: The Entrance Gate

Initial validation. This is the first filter. Every request is checked for structural validity, trusted identity, and basic execution readiness before any further processing occurs.

Lane 2: The Heuristic Guard

Risk evaluation. This layer evaluates requests for risky patterns, unsafe behavior, and policy conflicts before they reach sensitive systems or trigger irreversible actions.

Lane 3: The Courtroom

High-assurance review. Ambiguous or high-consequence actions move into deeper review before execution. This is where the platform applies its highest level of scrutiny.

1. Deeper review. The request is examined more carefully when fast checks are not sufficient to establish safety.

2. Governed outcome. Actions are approved, blocked, or routed for human oversight based on the available evidence.

3. Full record generated. Every high-assurance decision produces a traceable record for review, audit, and recurrence prevention.
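The three-lane flow can be sketched as a short pipeline. Only the lane roles come from the text above; the specific checks, the 0.3 and 0.8 risk cut-offs, the regex patterns, and the majority vote among juror functions in Lane 3 are hypothetical choices for illustration.

```javascript
// Lane 1: structural validity and trusted identity (hypothetical checks).
function entranceGate(req, trustedAgents) {
  return typeof req.action === "string" && trustedAgents.has(req.agentId);
}

// Lane 2: cheap heuristic risk score in [0, 1] (hypothetical patterns).
function heuristicRisk(req) {
  let risk = 0;
  if (/export|delete|transfer/.test(req.action)) risk += 0.5;
  if ((req.amount ?? 0) > 10000) risk += 0.4;
  return Math.min(risk, 1);
}

// Lane 3: independent reviewers vote; a strict majority decides, otherwise a human does.
function courtroom(req, jurors) {
  const votes = jurors.map((juror) => juror(req));
  const approve = votes.filter((v) => v === "approve").length;
  const block = votes.filter((v) => v === "block").length;
  if (approve > jurors.length / 2) return "approve";
  if (block > jurors.length / 2) return "block";
  return "human_review";
}

function govern(req, { trustedAgents, jurors }) {
  if (!entranceGate(req, trustedAgents)) return { outcome: "block", lane: 1 };
  const risk = heuristicRisk(req);
  if (risk < 0.3) return { outcome: "approve", lane: 2 };  // ordinary request
  if (risk >= 0.8) return { outcome: "block", lane: 2 };   // clearly unsafe
  return { outcome: courtroom(req, jurors), lane: 3 };     // ambiguous: escalate
}

const trustedAgents = new Set(["billing-agent"]);
const jurors = [
  () => "block",
  (r) => (r.amount > 5000 ? "block" : "approve"),
  () => "approve",
];
const show = (req) => {
  const r = govern(req, { trustedAgents, jurors });
  console.log(`${r.outcome} (lane ${r.lane})`);
};
show({ agentId: "billing-agent", action: "read_invoice" });                 // approve (lane 2)
show({ agentId: "billing-agent", action: "transfer_funds", amount: 7000 }); // block (lane 3)
show({ agentId: "rogue-agent", action: "read_invoice" });                   // block (lane 1)
```

Ordering the lanes from cheapest to most expensive means only the ambiguous middle band pays for the slower Lane 3 review.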

If Anchor8 goes down for any reason, agent actions are blocked by default. Governance is never bypassed by an outage.

See every risky decision before it lands.

Every dangerous or high-risk action is reconstructed from session context, tool calls, and LLM decisions into a clear record: what happened, where risk appeared, why it was flagged, and what to do next.

Your security team sees the exact action path.

From trigger to interdiction, every risky action is reconstructed into a clean evidentiary record your team can review in minutes.

Example finding: the action attempted to move from payment recovery into customer data export without matching authorization.

Know your agent. Revoke it cryptographically.

Universal Agent Identity gives every autonomous agent a persistent W3C DID and signing key. Know Your Agent binds that identity to verifiable claims about owner, model, permissions, safety level, and compliance posture.
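For the `did:web` method (the one named in the Governance Suite plan), the W3C Credentials Community Group's did:web specification maps each DID to an HTTPS URL that serves its DID document. A minimal sketch of that mapping follows; the domains and agent paths are made-up examples, and whether Anchorate resolves agent identities exactly this way is an assumption.

```javascript
// Map a did:web identifier to the HTTPS URL of its DID document, following
// the transformation described in the did:web method specification.
function didWebToUrl(did) {
  const prefix = "did:web:";
  if (!did.startsWith(prefix)) throw new Error(`not a did:web identifier: ${did}`);
  const [domain, ...pathSegments] = did.slice(prefix.length).split(":");
  // A port is percent-encoded in the DID (example.com%3A8443) and decoded back to a colon.
  const host = domain.replace(/%3A/gi, ":");
  // With no path, the document lives under /.well-known; otherwise ":" separators become "/".
  const path = pathSegments.length > 0 ? pathSegments.join("/") : ".well-known";
  return `https://${host}/${path}/did.json`;
}

console.log(didWebToUrl("did:web:agents.example.com"));
// https://agents.example.com/.well-known/did.json
console.log(didWebToUrl("did:web:agents.example.com:billing:agent-7"));
// https://agents.example.com/billing/agent-7/did.json
```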

Agent Passport

Every critical action is signed by the agent's own key pair and evaluated against its approved safety and capability envelope.
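Per-action signing can be sketched with Node's built-in `crypto` module. Ed25519, JSON serialization of the action, and the action fields are assumptions for illustration, since the algorithm Anchorate actually uses is not stated here; a real deployment would also need a canonical serialization so that signer and verifier hash identical bytes.

```javascript
const nodeCrypto = require("crypto");

// Each agent holds its own Ed25519 key pair (generated in memory for this sketch).
const { publicKey, privateKey } = nodeCrypto.generateKeyPairSync("ed25519");

// Serialize the action and sign it; Ed25519 takes `null` as the digest algorithm.
function signAction(action, key) {
  const payload = Buffer.from(JSON.stringify(action));
  return { action, signature: nodeCrypto.sign(null, payload, key).toString("base64") };
}

// The verifier re-serializes the action and checks it against the agent's public key.
function verifyAction({ action, signature }, key) {
  const payload = Buffer.from(JSON.stringify(action));
  return nodeCrypto.verify(null, payload, key, Buffer.from(signature, "base64"));
}

const signed = signAction({ agent: "billing-agent", op: "refund", amount: 120 }, privateKey);
console.log(verifyAction(signed, publicKey)); // true

// Changing any field after signing invalidates the signature.
const tampered = { ...signed, action: { ...signed.action, amount: 12000 } };
console.log(verifyAction(tampered, publicKey)); // false
```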

Anchorate issues verifiable metadata about the agent's baseline model, owner, capabilities, compliance flags, and approved transaction thresholds.

UAI is the cryptographic passport for each autonomous agent. KYA is the trust layer that proves who owns that agent, what model it runs, what it can do, and whether it meets your operating standards.

Give every autonomous agent a persistent W3C-aligned identity instead of relying on shared API keys.

Attach verifiable claims about owner, model, permissions, safety tier, and compliance posture before an agent is trusted.

Suspend a rogue or out-of-policy agent instantly with a revocation action that propagates across connected systems.
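Revocation that propagates across connected systems can be pictured as a shared registry that every enforcement point consults and subscribes to. The `RevocationRegistry` class below is a hypothetical in-process stand-in; a real deployment would distribute this state over the network.

```javascript
// Hypothetical in-process revocation registry; connected systems subscribe to changes.
class RevocationRegistry {
  #revoked = new Map(); // agentId -> reason
  #listeners = [];

  subscribe(listener) {
    this.#listeners.push(listener);
  }

  // Record the revocation, then notify every subscribed system immediately.
  revoke(agentId, reason) {
    this.#revoked.set(agentId, reason);
    for (const listener of this.#listeners) listener(agentId, reason);
  }

  isRevoked(agentId) {
    return this.#revoked.has(agentId);
  }
}

const registry = new RevocationRegistry();
const paymentsGatewayBlocklist = new Set();
registry.subscribe((agentId) => paymentsGatewayBlocklist.add(agentId));

registry.revoke("billing-agent", "exceeded approved refund threshold");
console.log(registry.isRevoked("billing-agent"));           // true
console.log(paymentsGatewayBlocklist.has("billing-agent")); // true
console.log(registry.isRevoked("support-agent"));           // false
```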

The result: a way to prove which agent acted, whether it was authorized, and how to disable it instantly if it drifts outside policy.

Enterprise AI adoption fails without trust, auditability, and revocation. UAI and KYA turn agent identity into an enforceable control plane.

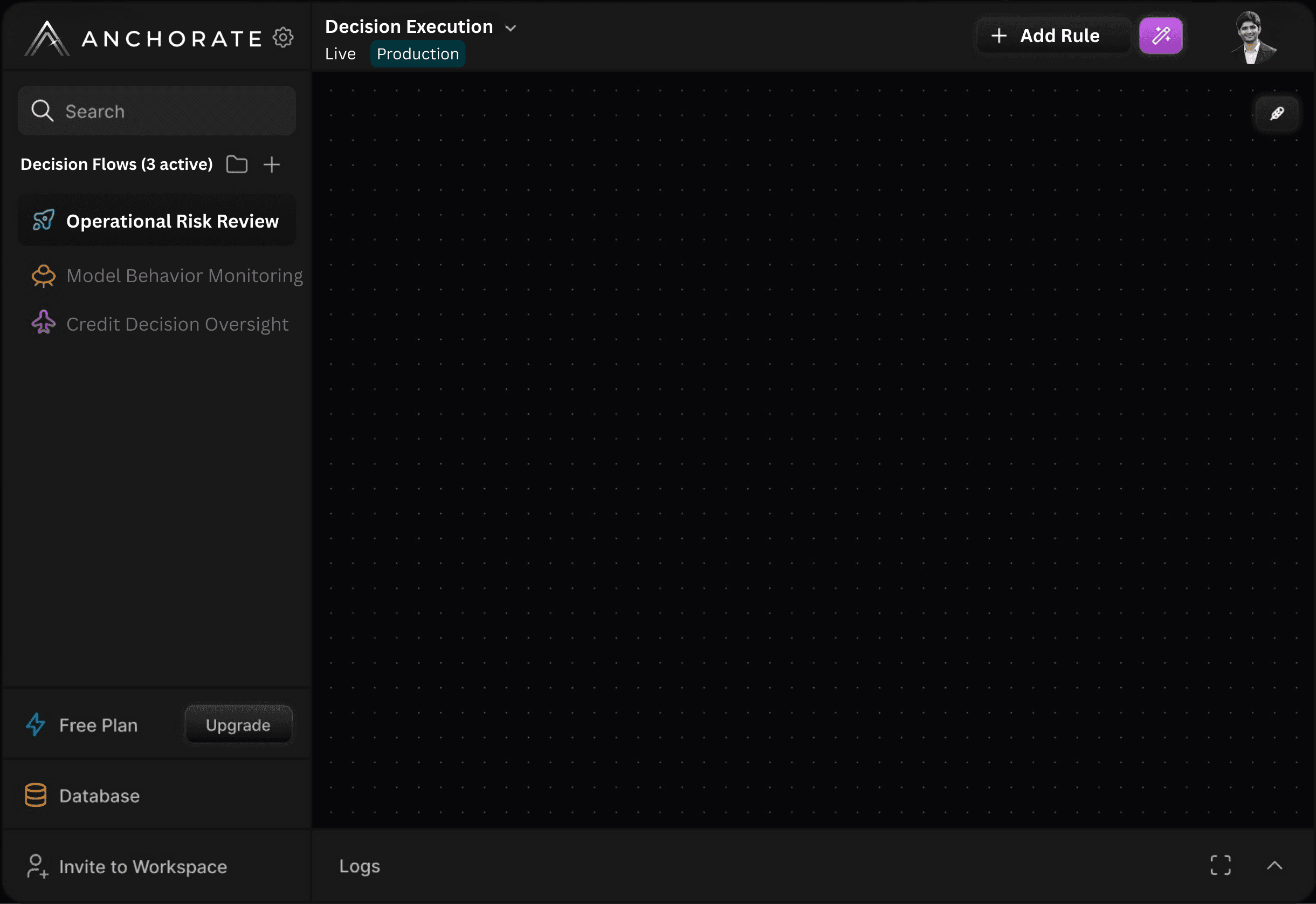

Smart automation in simple steps

Connect your tools

Plug in your models, agents, and pipelines to continuously observe inputs, outputs, and decisions.

Define guardrails & checks

Configure policies, thresholds, and evaluation logic to detect drift, risk, and non-compliant behavior.

Launch and let us handle it

Let Anchorate automatically surface issues, trigger reviews, and route actions while keeping you in control.

Our results in numbers

100%

Decision traceability

Every decision logged and explainable.

24/7

AI oversight

Continuous monitoring in production.

0

Blind decisions

No action without context.

Choose your plan

From proof of concept to full enterprise rollout. Every tier includes the full governance stack: Lanes 1, 2, and 3.

The Pilot

PROOF OF CONCEPT

Time-boxed evaluation for engineering teams. Full access to every core feature, zero commitment.

- 14-day pilot window

- Up to 500 Lane 3 adjudications

- 1 agent integration

- Full PDF compliance reports

- KYA agent identity dashboard

- Basic decision logs

- Dedicated onboarding call

- 1 seat

Governance Suite

ANNUAL LICENSE

Production-grade AI governance for regulated organizations. Built to replace compliance headcount.

- Unlimited Lane 1 and Lane 2 checks (validation and heuristics)

- 25,000 Lane 3 adjudications per month

- Multi-agent support (up to 10 agents)

- Verified DID identity per agent (did:web)

- Full compliance export (PDF + Chain of Custody)

- Custom heuristic policy rules

- 1-year audit log retention

- Incident replay and visualization

- SLA: 99.5% uptime, p95 latency under 8 s

- Up to 20 seats

- Priority email and Slack support

Variable Courtroom

METERED USAGE

Everything in Governance Suite, plus metered Lane 3 billing for high-volume adjudication at scale.

- Unlimited Lane 3 adjudications (metered per adjudication)

- Parallel LLM reasoning visible in reports

- High Assurance mode (3 jurors for critical decisions)

- Tamper-evident audit history (append-only SHA-256 chain)

- Real-time per-agent usage dashboard

- AI liability insurance add-on

- Custom SLA: 99.9% uptime, p95 latency under 5 s

- Compliance API (machine-readable GRC exports)

- Unlimited seats
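An append-only SHA-256 chain, as listed in the Variable Courtroom tier, can be sketched as a log where each entry stores the hash of the previous one, so altering an earlier entry breaks every link after it. The entry fields, the `append` and `verifyChain` names, and the all-zero genesis value are assumptions for this sketch.

```javascript
const { createHash } = require("crypto");

const GENESIS = "0".repeat(64); // assumed starting value for the first link

function hashEntry(prevHash, record) {
  return createHash("sha256").update(prevHash).update(JSON.stringify(record)).digest("hex");
}

// Append a record, linking it to the hash of the previous entry.
function append(log, record) {
  const prevHash = log.length ? log[log.length - 1].hash : GENESIS;
  log.push({ record, prevHash, hash: hashEntry(prevHash, record) });
}

// Recompute every link; edited or reordered entries fail verification.
function verifyChain(log) {
  let prevHash = GENESIS;
  for (const entry of log) {
    if (entry.prevHash !== prevHash || entry.hash !== hashEntry(prevHash, entry.record)) return false;
    prevHash = entry.hash;
  }
  return true;
}

const auditLog = [];
append(auditLog, { agent: "billing-agent", verdict: "block" });
append(auditLog, { agent: "support-agent", verdict: "approve" });
console.log(verifyChain(auditLog)); // true

auditLog[0].record.verdict = "approve"; // tamper with history
console.log(verifyChain(auditLog)); // false
```

A chain like this makes tampering detectable rather than impossible; silently dropping the newest entries still requires the latest hash to be anchored somewhere outside the log.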

All plans include SSO, RBAC, dedicated infrastructure, and a signed Data Processing Agreement (DPA).

Feature comparison

Why teams choose Anchorate

Generic monitoring tools were built for traditional software. They tell you what happened. Anchorate stops it before it does.

- Blocks actions before execution

- Real-time hallucination detection

- Bias and fairness review

- Adversarial verdict with reasoning

- Cryptographic agent identity (W3C DID)

- Precedent memory across sessions

- EU AI Act aligned audit trail

- Fail-closed by design

- Vendor-independent


Partial support denotes logging or post-hoc review only, not real-time blocking.

Frequently asked questions

Everything buyers, operators, and compliance teams need to understand Anchor8.

Something unclear? Reach out anytime.

Ship AI decisions with confidence

Monitor, govern, and audit AI decisions in real time before they impact users, money, or trust.